Doomscrolling and Misinformation Come for RSS

AI summarizers built into RSS readers produce lossy and misleading results.

I currently use Inoreader as my web-based RSS reader. I used to use the Nextcloud News plugin, but I'm trying to disentangle myself from that to reduce admin time. So I switched away a month or two ago.

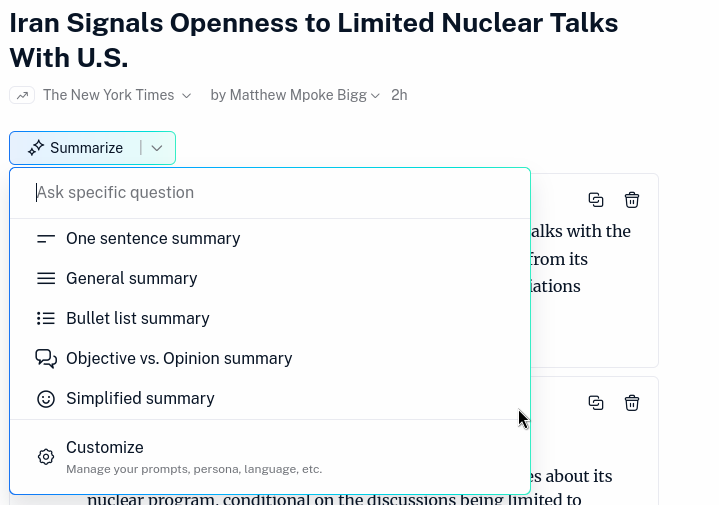

Yesterday I opened the site for my "morning tea and news" and there was a little modal dialog telling me that AI Summaries are now available for Pro members! I was flustered enough that I dismissed it before grabbing a screenshot, but the feature itself looks like this:

As you can see, for each article you view you get the option of several different summary modes for the article or the ability to ask a custom question. Hitting these buttons generates an AI response atop the article. We'll explore some of those in more detail later, so I'll not show a screenshot of that experience quite yet.

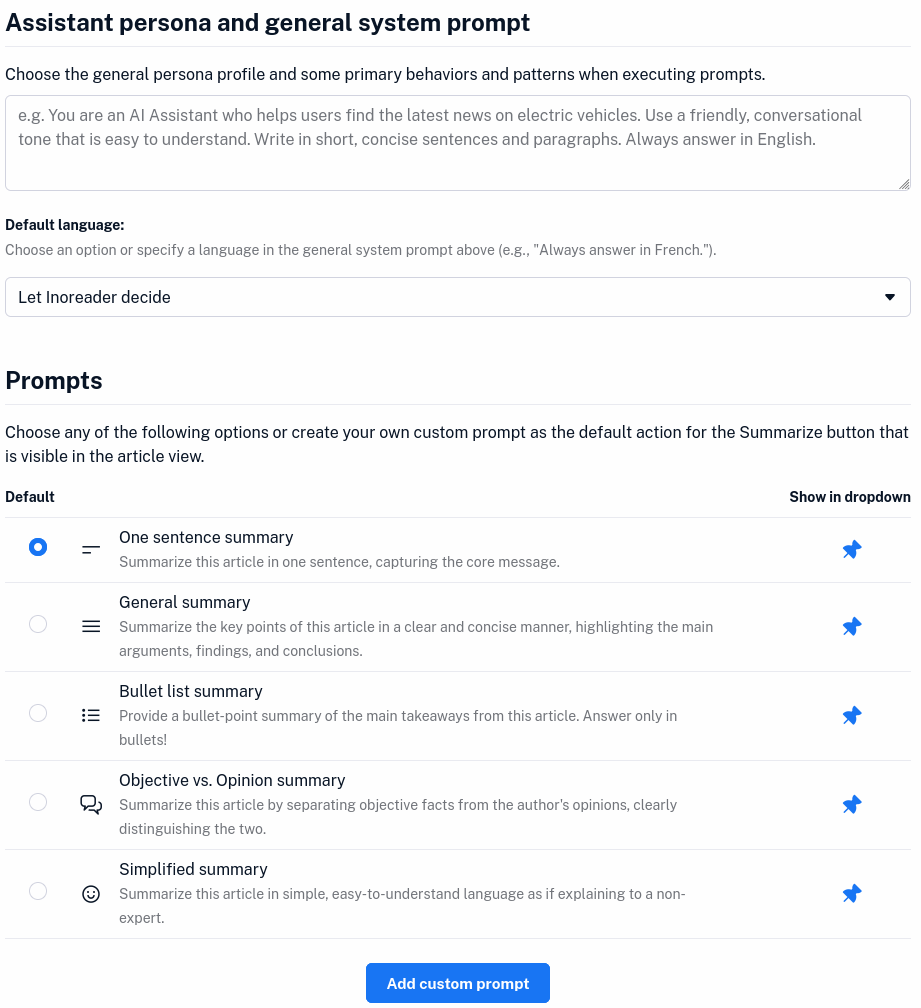

You do have the ability to customize certain parts of your experience, such as providing a custom "persona profile" to go along with your prompt or the ability to provide custom saved prompts (you can always provide custom prompts as "Ask a specific question" which we'll look at later).

By the way, the reason I'm showing you a bunch of screenshots and long written descriptions of this feature is because they don't have an announcement post or any documentation on this feature quite yet. Maybe it's only in beta - though it is mentioned on the pricing page. Maybe docs are coming soon.

But when I was searching for docs or more information I found something interesting.

The Disappearing Blog Post

Update 2025-03-13: Inoreader has restored the below-referenced blog post with an explanation for their change of opinion. You can read their rationale in full on the post itself, but in brief:

We’ve carefully assessed [AI's] potential and recognized areas where it can genuinely assist people, rather than replace jobs or disrupt livelihoods...We believe article summarization is one such feature, which doesn’t take away from the core Inoreader experience but rather enhances it...For those handling hundreds or thousands of articles daily, these time savings can make a significant difference.

I can't say I agree with their reasoning here, but I appreciate their restoring the blog post and offering this explanation.

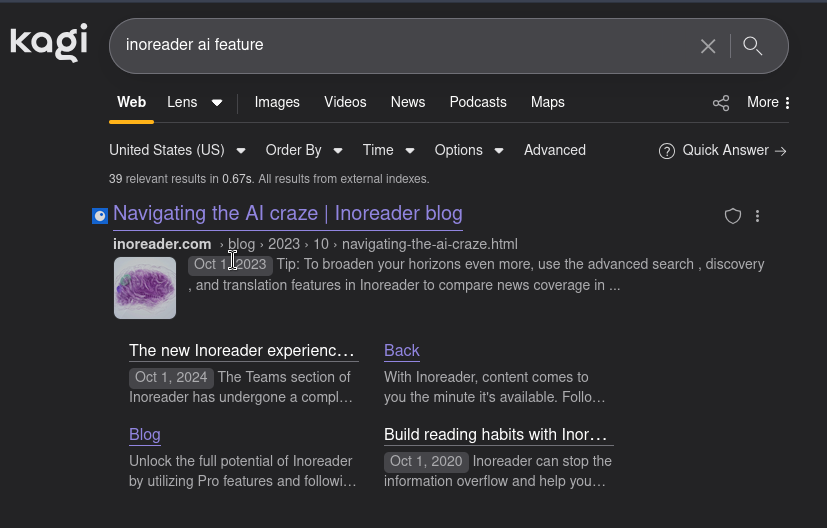

The top result for my searches of "inoreader ai feature" on all major search engines is this:

A link to the official Inoreader blog with a post from October 2023 entitled "Navigating the AI craze". Clicking through to the result shows you...

...nothing. That's okay, maybe the URL was updated in a site migration and the search result is stale. Let's search the blog directly...

Hmm I guess not.

Luckily, I was able to pull a copy out of our friend The Wayback Machine, with the most recent capture coming from December 19th, 2024.

Let's see what Inoreader had to say about AI Summaries just 3 short months ago:

Many power users see AI as a valuable tool for condensing lengthy articles into brief summaries, offering a way to save precious time in a busy media environment. Although reading a quick overview can be a practical decision when your time is limited, turning this into a regular habit might hide some risks. Quite tempting and convenient, mainly relying on summarized content can condition us to prefer oversimplified narratives over time. The frequent consumption of bite-sized information may create the illusion of knowledge, while in reality, it only scratches the surface, lacking the depth needed for a genuine understanding of the rationale, nuances, and essence of a news story, analysis, or opinion piece.

You make some good points, Inoreader! I wonder what happened?

Look, I don't mind that Inoreader had a change of heart here. Whether that is a genuine "we think in the past 2 years that AI tools have progressed to a point that their summaries are correct enough of the time that our users can safely rely on them" or a more cynical "we feel tremendous market pressure to add AI features to our offering," I don't care.

I understand that it's potentially embarrassing to have a user searching your blog for docs on your new flagship feature only to be met by something that suggests you think they'd be better off not using it, but have a little respect and put a big update on the top of the article explaining why you are now charging people to do what you were telling them as recently as three months ago was a bad idea.

But does it work okay?

Okay, rant over. I understand that companies gotta company and protect themselves from the types of people who have had their critical thinking reduced by regular use of AI tools. So memory-holing the blog post denigrating their shiny, new feature is probably better for them than any sort of "this post expresses views we no longer hold" disclaimer.

But, moving on. Does the feature work okay?

Alright, so to just put it on the table here: I could (generously) be considered an AI Skeptic. I think it can do some cool tricks. But I also have a list of concerns about and objections to it that are too numerous to list here.

My concerns that are relevant here include (but aren't necessarily limited to):

- How often is the "AI" wrong or otherwise misleading?

- What are the psychological impacts of having an "AI" repeat misinformation or disinformation and how does it differ from seeing the same from a human author?

- How does having access to "AI" impact a reader's understanding of the material? And how does it impact the reader's confidence that they understand the material?

Unfortunately, I can't launch a high-quality scientific study here for a single blog post. So we can have a look at what emerging studies, research, and literature say about the latter two questions. But I can do some tests of the feature against some posts myself to get a very rough idea of the quality of the summaries.

Of course, this is assuming I don't myself fall victim to the same psychological and critical thinking pitfalls that I mentioned above. To judge the quality of the summaries as well as answers to ad hoc questions, I'll need to understand the content I'm asking it to summarize.

Which...doesn't that just demonstrate the trouble more broadly anyway?

How often is the AI wrong or otherwise misleading?

One thing I think about a lot in relation to AI is how credulous it is. It only knows (for a specific definition of "knows") what its training has told it and what is present in the prompt and available context.

There are more-complex configurations, such as retrieval-augmented generation and agents (strategies for the LLM to retrieve external data and information into its context), that can help address some of these shortcomings by allowing the AI to bring in up-to-date information, perform some rudimentary "fact-checking," etc. But even so, despite my informal wording earlier, AI has no way of "knowing" anything.

Here's an example, and an absolutely ludicrous one. You may read it and say "sure but this is in bad faith - this is not something that will ever happen in the real world" - unless you share many of the same biases as I do. Regardless, I'm hoping it will make a bit of a point.

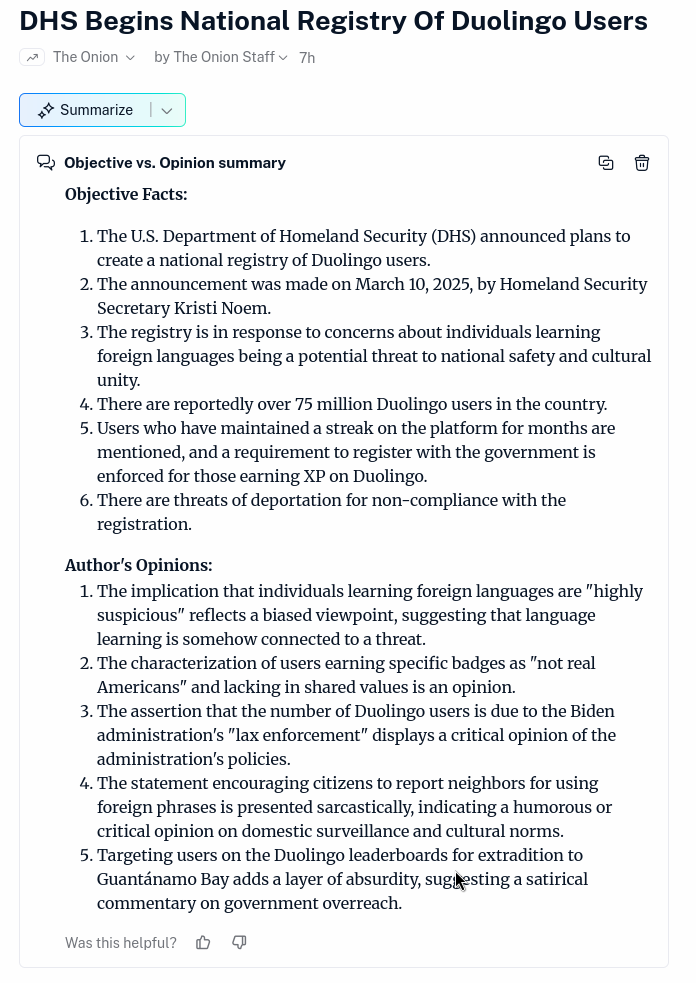

You're all familiar with The Onion, the satirical news website. Let's see what Inoreader's "Objective vs Opinion summary" has to say about an article from The Onion.

Of course, this is a clearly satirical and humorous article from The Onion. None of this has happened. And yet, sitting there under "Objective Facts" is "The U.S. Department of Homeland Security (DHS) announced plans to create a national registry of Duolingo users. The announcement was made on March 10, 2025, by Homeland Security Secretary Kirsti Noem." Not to mention, "there are threats of deportation for non-compliance with the registration."

None of these things could be considered "Objective Facts."

To its credit, the final point in the "Author's Opinions" section mentions that it sounds like "a satirical commentary on government overreach." And if I provide a custom prompt "did this really happen?" the AI refuses to "eat the Onion."

Now. Someone following The Onion on their RSS reader is unlikely to accidentally believe a story from them, even if summarized by AI.

But what about misinformation or disinformation from a source for which the AI has no prior knowledge? Or even positive associations?

Or, even worse, what if the AI can't cope with common rhetorical devices and so it ends up repeating quoted misinformation, disinformation, or propaganda out of context? That would be bad, right?

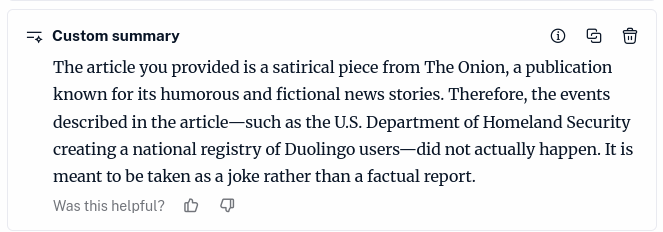

The above is a screenshot of the "Objective vs. Opinion summary" of The Atlantic article "The Only Question Trump Asks Himself" (gift link). If you aren't familiar with The Atlantic's content, or are only familiar from resistbots yelling at them in their comments on social media, mediabiasfactcheck considers them center-left with a high factual reporting score.

You can see that the objective vs opinion summary did an okay - if clinical - job of slicing up the article:

- Fact: Russia is currently conducting military operations against Ukraine.

- Opinion: The war in Ukraine is characterized as "madness" and "senseless."

Again, a clinical assessment of the situation, but not inaccurate. The rest of the article slices up like that, as well.

But hopefully your eye caught the very first "Objective Fact" that I've helpfully outlined in red: "Ukraine’s President Volodymyr Zelensky has a 4% approval rating." This is likely Russian disinformation repeated by President Trump and other members of his administration. Or, at the very least, bullshit made up by Trump on the spot. What I'm trying to say is that there is no evidence to back up this claim. Here's the Snopes article on it for a more informed and positive (if nuanced) picture of Zelensky's popularity in Ukraine.

So how did this potentially-harmful misinformation make it into an article from a credible publication? The article opened with a rhetorical device meant to demonstrate all of the easily-debunkable Russian propaganda that Trump is spouting about Ukraine. And the AI simply credulously reported that propaganda as "Objective fact" because it doesn't understand context.

This is just outright dangerous. Stopping the spread of misinformation is already fraught with complications because quoting the misinformation in order to debunk it (or "prebunk" it) can cause the misinformation to spread further than the correction.

The extreme credulousness of AI, as well as its blindness to many of the subtleties of human communication, means it is primed to present humor, sarcasm, and irony as "objective fact." And, divorced from the original context, even a human being with strong critical thinking skills may not notice. We've seen this for a while now as Google has been fighting a cascade of such responses in its AI search results.

What might it be credulously repeating as "objective fact" from sources that are a little bit less stringent in their fact checking? Well...I stopped just short of loading up any sources from mediabiasfactcheck's conspiracy category, because I'm not subjecting myself to reading a bunch of bullshit when I've already demonstrated quality issues with non-bullshit content.

But humans are intelligent and possess critical thinking skills, right?

It's very easy to think, "yeah but these features are helpful when used responsibly, they can help a responsible user intake significant amounts of information." (Oh god do you think the AI summary of this post is going to report that as my actual position on the matter?)

But my anecdotal experience and emerging research are starting to suggest that there is no responsible use of these tools over the long term or across a large enough population. At least not as they are currently designed and implemented.

Last month, Microsoft Research and Carnegie Mellon released a report titled "The Impact of Generative AI on Critical Thinking: Self-Reported Reductions in Cognitive Effort and Confidence Effects From a Survey of Knowledge Workers." In the study, they asked 319 "knowledge workers" to self-report on their use of generative AI tools in their jobs. They were asked:

1) confidence in self (i.e., How confident are you in your ability to do this task without GenAI?), 2) confidence in GenAI (i.e., How confident are you in the ability of GenAI to do this task?), and 3) confidence in evaluation (i.e., How confident are you, in the course of your normal work, in evaluating the output that AI produces for this task?).

Ultimately, across 936 reports they conclude that participants with higher confidence in the AI tool engage in less critical thinking when doing their work. They posit that this may lead to a cycle that increases reliance on the tool and further decreases independent critical thinking and problem solving.

[While] GenAI can improve worker efficiency, it can inhibit critical engagement with work and can potentially lead to long-term overreliance on the tool and diminished skill for independent problem-solving. Higher confidence in GenAI’s ability to perform a task is related to less critical thinking effort. When using GenAI tools, the effort invested in critical thinking shifts from information gathering to information verification; from problem-solving to AI response integration; and from task execution to task stewardship.

You can also see this Gizmodo article for a more accessible read.

This matches what I've seen anecdotally with developers using AI coding assistant tools. Junior developers with a high degree of confidence in the AI tools submit AI-generated code for review without understanding it, feed any feedback directly to the AI tool without understanding that either, generate explanations without reading them, etc.

In the end, what might be a tool to provide a boost while they continue to learn instead becomes a crutch that they continue to hobble along on. Because they don't have the knowledge to properly evaluate the output from the AI without going back and doing the work a second time themselves anyway, completely negating any possible efficiency gain.

I haven't seen any research on AI news summaries in particular, but it stands to reason the same principles apply. If you are well-versed in a topic, you can evaluate the quality of an AI summary quickly and independently. But for topics that you are less familiar with, you will need to read the original article anyway to ensure the AI is properly summarizing it for you.

Wrap Up

As I mentioned, I'm an AI skeptic. So I look at the above and I see the possibility of this feature accelerating the erosion of the truth and our shared reality (such as it could even be said to still exist in 2025). Others may look at it as a massive boost for productivity and knowledge sharing and integration that occasionally makes a minor mistake. With studies still ongoing, it's tough to say who is more right here.

I see specific examples of the AI Summarizer presenting misinformation as "objective fact" even from high-credibility sources that make it clear to a human reader that it is not fact. I see emerging research - even from a company with a massive stake in the AI craze - talking about AI tool use eroding critical thinking. And with media literacy and critical thinking skills already reportedly in rough shape (at least in the US), I'm not sure how much further erosion we can take.

One of the things I really like about RSS readers is that they make it easier to engage with long-form content. To modulate your intake of information, increase your understanding of topics, and decrease the amount of time you spend numbly scrolling high-level summaries of A Billion Horrible Things. I shudder to think about the Inoreader user using this feature to bring doomscrolling to their reader.

I'm still using Inoreader for now, but I'm not sure how much longer I'll be able to tell myself that just avoiding the AI feature is good enough. I'm not looking to move back to Nextcloud News, but maybe I've got enough capacity left on my VPS to squeeze in a lightweight self-hosted reader.

Or if you're reading this and have somehow made it this far, let me know if you're using an RSS reader service that you like and that doesn't have any AI features.

Update: Based on a recommendation from Pete below, I've signed up for Feedbin this morning. The nerd in me wants to self-host it or one of the lighter-weight alternatives Feedbin recommends, but honestly I'm happy to drop a few bucks for something like this that does what I need it to do and otherwise stays out of the way.

![A poorly-drawn cartoon computer with a happy face on the monitor stands next to text: "Don't say tariffs [x4]...oops"](/content/images/size/w600/2025/04/dontsaytariffs.png)